Do You Take Trade Show Exhibit Infrastructure For Granted?

Think about this for a moment: do you assume that Information Technology (IT) and cloud-based data centers, along with the data infrastructure supporting their various applications, will be accessible when needed? Likewise, when you go to a trade show, conference, symposium, user group or another conclave, do you assume that the trade show, exposition (expo), exhibits, booths, stands or demo areas will be ready, waiting and accessible?

Fire Disrupts Flash Memory Summit Conference Exhibits

This past week at the Flash Memory Summit (FMS) conference and trade show in Santa Clara, California, what would normally be taken for granted (e.g. the expo hall and exhibits) was disrupted. The disruption (more here and here) was caused by an early morning fire in one of the exhibitors’ booths (stands) in the expo hall (view some photos here via Toms Hardware.com).

Fortunately, nobody was hurt, at least physically, and the physical damage appears to have been isolated.

However, while the keynotes, panels, and other presentations did take place in the spirit of "the show must go on", the popular expo hall did not. Granted, for some people who attend conferences or seminars only for the presentation content, the lack of an exhibition hall simply meant no free giveaways.

On the other hand, for those who attend events like FMS mainly for the exhibition hall experience, the show did not go on, perhaps resulting in a trip in vain (e.g. how you might be able to recoup some travel costs in some scenarios) for some people. For example, those who were attending to meet with a particular vendor, see a product technology, conduct some business or other meetings, do an interview, video, podcast, take some photos, or simply get some free stuff were disrupted.

Likewise, those behind the scenes were disrupted, from conference organizers and event staff to the vendors and sponsors who put resources (time, money, people, and equipment) into an exhibit. Vendors were still able to issue their press releases and conduct their presentations, keynotes, and panel discussions; however, what about the lack of the expo?

Do We Take Data and Event Infrastructures For Granted?

This raises the question of whether trade show exhibits still have value, or whether an event can function without one.

I am not sure. Some events can and do stand on their merit with presentation content being the primary focus, for others the expo is the draw, and many are hybrids with a mix of both.

A question, and the point of this piece, is how many people take conferences in general, and exhibits along with their associated infrastructure, for granted?

How many know or understand the amount of time, money, people resources and various tradecraft skills across different disciplines that go into event planning, staging, coordination, and execution so that these events occur?

This also ties into the theme of how many people simply assume that IT data centers and clouds, along with their data infrastructure resources and services, are available to support applications and the data access that provides information.

The same holds true for your telephone (plain old telephone system [POTS] and cellular or mobile) service, gas, electric, sewer, water, waste (garbage), traditional or network based television, internet provider, highways, railroads, airports, the list goes on.

What This All Means

The good news is that nobody was physically injured this past week.

Granted, some may have incurred emotional, monetary, or public relations and marketing related injuries; however, those can be dealt with over time.

My point is, do we assume too much (perhaps rightfully so) that events, exhibits and other trade show or conference related items are always on, always available, accessible, and open on time? With IT data centers and clouds, you have different expectation levels of access, availability, durability, and survivability for a given cost to meet service expectations.

Next time you attend a webinar, seminar, conference, symposium, trade show, presentation, exhibit or expo, take a moment and look around at what you see, as well as what you do not see. Having been involved in and around conferences, conventions, seminars, and expos across different industries, both behind the scenes as well as on the public side, I do not take these events for granted.

Knowing what goes into the planning, coordination, scheduling, promotion, logistics, and all the other things behind the scenes, next time you go to an event, look around. What can you see that perhaps is not meant to be seen as part of the infrastructure? In event venue exhibit halls as well as data centers, there are those things you see, such as data infrastructure resources including racks of servers, storage, I/O networking, monitors, displays, work areas, and heating, ventilation and air conditioning (HVAC), along with those you might not see.

What you might not see and take for granted are the smoke and fire detection along with suppression systems which at the Santa Clara convention center appeared to have done their job. There are also the electrical power and distribution systems; perhaps battery backed uninterruptible power systems (UPS) along with standby alternate generator power.

How about a big round of applause, a thank you, an atta boy and atta girl, acknowledgment and other signs of appreciation for all those behind the scenes who do the planning, preparation, coordination, setup, and tear down, as well as the in-person work you see at events?

Thank you to all who have enabled, and continue to enable, trade shows, conferences, seminars, exhibits, and road shows among other events to take place; after all, the show must go on. In other words, as with IT and cloud data centers, do you take trade show exhibit infrastructures for granted?

Ok, nuff said, for now.

Greg Schulz – Multi-year Microsoft MVP Cloud and Data Center Management, VMware vExpert (and vSAN). Author of Software Defined Data Infrastructure Essentials (CRC Press), as well as Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press), Resilient Storage Networks (Elsevier) and twitter @storageio.

Courteous comments are welcome for consideration. First published on https://storageioblog.com any reproduction in whole, in part, with changes to content, without source attribution under title or without permission is forbidden.

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2023 Server StorageIO(R) and UnlimitedIO. All Rights Reserved.

NVMe Won’t Replace Flash By Itself, They Complement Each Other

Updated 2/2/2018

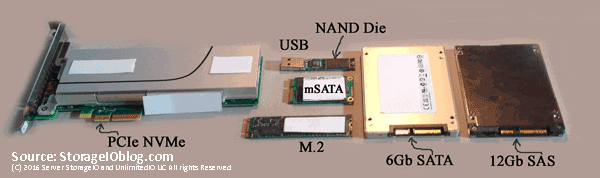

Various Solid State Devices (SSD) including NVMe, SAS, SATA, USB, M.2

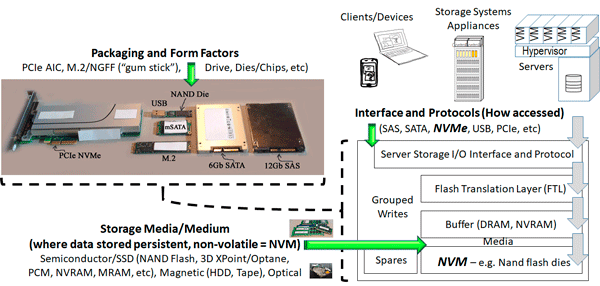

There has been some recent industry marketing buzz generated by a startup seeking attention by claiming, via a study whose sponsors include the startup itself, that Non-Volatile Memory (NVM) Express (NVMe) will replace flash storage. Granted, many IT customers as well as vendors are still confused by NVMe, thinking it is a storage medium as opposed to an interface used for accessing fast storage devices such as NAND flash among other solid state devices (SSDs). Part of that confusion can be tied to the fact that common SSD-based devices rely on NVM, which is persistent memory that retains data when powered off (unlike the memory in your computer).

Instead of saying NVMe will mean the demise of flash, what should or could be said, though some might be scared to say it, is that other interfaces and protocols such as SAS (Serial Attached SCSI), AHCI/SATA, mSATA, Fibre Channel SCSI Protocol aka FCP aka simply Fibre Channel (FC), iSCSI and others are what can be replaced by NVMe. NVMe is simply the path or roadway, along with the traffic rules, for getting from point A (such as a server) to point B (some storage device or medium, e.g. flash SSD). The storage medium is where data is stored, such as magnetic media for a Hard Disk Drive (HDD) or tape, NAND flash, 3D XPoint, or Optane among others.

NVMe and NVM including flash are better together

The quick, get-to-the-point version is that NVMe (Non-Volatile Memory Express, aka NVM Express) is an interface protocol (like SAS, SATA and iSCSI among others) used for communicating with various non-volatile memory (NVM) and solid state devices (SSDs). NVMe is how data gets moved between a computer or other system and NVM persistent memory such as NAND flash, 3D XPoint, Spintorque or other storage class memories (SCM).

In other words, the only thing NVMe will, should, might or could kill off is the use of some other interface such as SAS, SATA/AHCI, Fibre Channel, or iSCSI, along with proprietary drivers or protocols. On the other hand, given the extensibility of NVMe and how it can be used in different configurations, including as part of fabrics, it is an enabler for various NVMs, also known as persistent memories, SCMs, and SSDs, including those based on NAND flash as well as emerging 3D XPoint (or the Intel version) among others.
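One way to picture the interface versus medium distinction is as two independent fields on a device. The following is only a minimal sketch in Python: the `StorageDevice` class, the `replace_interface` helper and the two name sets are hypothetical, made up for illustration from the names mentioned above, and are not any vendor's or standard's API.

```python
from dataclasses import dataclass

# Illustrative names taken from the text above; the sets are not exhaustive.
INTERFACES = {"NVMe", "SAS", "SATA/AHCI", "Fibre Channel", "iSCSI"}
MEDIA = {"NAND flash", "3D XPoint", "magnetic HDD", "magnetic tape"}


@dataclass(frozen=True)
class StorageDevice:
    """A device described by two independent choices (hypothetical model)."""
    interface: str  # the roadway and traffic rules used to reach the device
    medium: str     # where the data is actually stored

    def __post_init__(self):
        # Reject values that mix up the two dimensions, e.g. NVMe as a medium.
        if self.interface not in INTERFACES:
            raise ValueError(f"{self.interface!r} is not a known interface")
        if self.medium not in MEDIA:
            raise ValueError(f"{self.medium!r} is not a known medium")


def replace_interface(device: StorageDevice, new_interface: str) -> StorageDevice:
    """Swap the access interface while leaving the storage medium untouched."""
    return StorageDevice(interface=new_interface, medium=device.medium)


sas_flash = StorageDevice(interface="SAS", medium="NAND flash")
nvme_flash = replace_interface(sas_flash, "NVMe")
print(nvme_flash)
```

In this sketch NVMe takes the place of SAS while the NAND flash medium stays put, and trying to build a device with NVMe as its medium raises an error, which is the same mix-up described above.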

Where To Learn More

View additional NVMe, SSD, NVM, SCM, Data Infrastructure and related topics via the following links.

- Use Intel Optane NVMe U.2 SFF 8639 SSD drive in PCIe slot

- NVMe overview and primer – Part I

- Part II – NVMe overview and primer (Different Configurations)

- Part III – NVMe overview and primer (Need for Performance Speed)

- Part IV – NVMe overview and primer (Where and How to use NVMe)

- Part V – NVMe overview and primer (Where to learn more, what this all means)

- PCIe Server I/O Fundamentals

- If NVMe is the answer, what are the questions?

- NVMe Wont Replace Flash By Itself

- Via Computerweekly – NVMe discussion: PCIe card vs U.2 and M.2

- Intel and Micron unveil new 3D XPoint Non-Volatile Memory (NVM) for servers and storage

- Part II – Intel and Micron new 3D XPoint server and storage NVM

- Part III – 3D XPoint new server storage memory from Intel and Micron

- Server storage I/O benchmark tools, workload scripts and examples (Part I) and (Part II)

- Data Infrastructure Overview, It’s What’s Inside of Data Centers

- Software Defined, Converged Infrastructure (CI), Hyper-Converged Infrastructure (HCI) resources

- The SSD Place (SSD, NVM, PM, SCM, Flash, NVMe, 3D XPoint, MRAM and related topics)

- The NVMe Place (NVMe related topics, trends, tools, technologies, tip resources)

- Data Protection Diaries (Archive, Backup/Restore, BC, BR, DR, HA, RAID/EC/LRC, Replication, Security)

- Software Defined Data Infrastructure Essentials (CRC Press 2017) including SDDC, Cloud, Container and more

- Various Data Infrastructure related events, webinars and other activities

Additional learning experiences along with common questions (and answers), as well as tips, can be found in the Software Defined Data Infrastructure Essentials book.

What This All Means

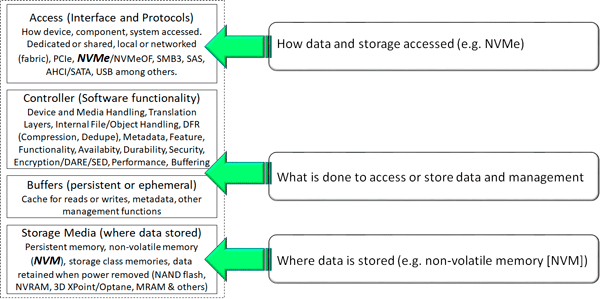

Context matters. For example, NVM is the medium, while NVMe is the interface and access protocol. With context in mind, you can compare like to like (apples to apples), such as NAND flash, MRAM, NVRAM, 3D XPoint, and Optane among other persistent memories, also known as storage class memories, NVMs and SSDs. Likewise, with context in mind, NVMe can be compared to other interfaces and protocols such as SAS, SATA, PCIe, mSATA, and Fibre Channel among others. The following puts all of this into context, including various packaging options, interfaces and access protocols, functionality and media.

Putting IT all together

Will NVMe kill off flash? IMHO no, not by itself. However, NVMe combined with some other form of NVM, SCM or persistent memory as a storage medium may eventually emerge as an alternative to NVMe and flash (or SAS/SATA and flash). For now at least, for many applications, NVMe is in your future (along with flash among other storage mediums); the questions include when, where, why, how, and with what, among others (and their answers). NVMe won’t replace flash by itself (at least not yet), as they complement each other.

Keep in mind, if NVMe is the answer, what are the questions?

Ok, nuff said, for now.

Gs

Greg Schulz – Microsoft MVP Cloud and Data Center Management, VMware vExpert 2010-2017 (vSAN and vCloud). Author of Software Defined Data Infrastructure Essentials (CRC Press), as well as Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press), Resilient Storage Networks (Elsevier) and twitter @storageio. Courteous comments are welcome for consideration. First published on https://storageioblog.com any reproduction in whole, in part, with changes to content, without source attribution under title or without permission is forbidden.

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO. All Rights Reserved. StorageIO is a registered Trade Mark (TM) of Server StorageIO.

Zombie Technology Life after Death Tape Is Still Alive

A zombie technology is one that has been declared dead yet has life after death, such as tape, which is still alive.

Image via StorageIO.com (licensed for use from Shutterstock.com)

Tape’s Evolving Role

Sure, we have heard for decades about the death of tape, and someday it will be dead and buried (I mean really dead), no longer used and existing only in museums. Granted, tape has been on the decline for some time, and even with many vendors exiting the marketplace, there remains continued development and demand within various data infrastructure environments, including software defined as well as legacy.

Tape remains viable for some environments as part of an overall memory and data storage hierarchy, including as a portable (transportable) as well as bulk storage medium.

Keep in mind that tape’s role as a data storage medium continues to change, as does its location. The following table (via Chapter 10 of Software Defined Data Infrastructure Essentials (CRC Press)) shows examples of various data movements from source to destination. These movements include migration, replication, clones, mirroring, backup, and copies, among others. The source device can be a block LUN, volume, partition, physical or virtual drive, HDD or SSD, as well as a file system, object, or blob container or bucket. Examples of the modes in Table 10.1 include D2D backup from local to local (or remote) disk (HDD or SSD) storage, or D2D2D copy from local to local storage, then to remote.

| Mode | Description |
|------|-------------|
| D2D | Data gets copied (moved, migrated, replicated, cloned, backed up) from source storage (HDD or SSD) to another device or disk (HDD or SSD)-based device. |
| D2C | Data gets copied from a source device to a cloud device. |
| D2T | Data gets copied from a source device to a tape device (drive or library). |
| D2D2D | Data gets copied from a source device to another device, and then to another device. |
| D2D2T | Data gets copied from a source device to another device, then to tape. |
| D2D2C | Data gets copied from a source device to another device, then to cloud. |

Data Movement Modes from Source to Destination

Note that movement from source to target can be a copy, rsync, backup, replicate, snapshot, clone, or robocopy among many other actions. Also note that in the earlier examples tape can exist in clouds; in other words, its place and its use are changing. Tip – In the past, “disk” usually referred to HDD. Today, however, it can also mean SSD. Think of D2D as not being just HDD to HDD, as it can also be SSD to SSD, Flash to Flash (F2F), or S2S among many other variations if you prefer (or need).
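The mode names follow a simple pattern: each letter is a stop, and each "2" reads as "to". As a rough sketch, assuming only the three letters used in the table (D, T and C), a mode string can be split into its individual source to destination hops. The `parse_mode` function and the `STOPS` mapping are hypothetical names used for illustration, not something from the book.

```python
from typing import List, Tuple

# Letters used by the modes in the table; anything else is rejected.
STOPS = {
    "D": "disk (HDD or SSD)",
    "T": "tape (drive or library)",
    "C": "cloud",
}


def parse_mode(mode: str) -> List[Tuple[str, str]]:
    """Split a mode such as 'D2D2T' into (source, destination) hops."""
    letters = mode.upper().split("2")
    if len(letters) < 2 or any(letter not in STOPS for letter in letters):
        raise ValueError(f"unrecognized data movement mode: {mode!r}")
    # Pair each stop with the one after it: D2D2T -> (D, D), (D, T)
    return [(STOPS[src], STOPS[dst]) for src, dst in zip(letters, letters[1:])]


for source, destination in parse_mode("D2D2T"):
    print(f"{source} -> {destination}")
```

Per the tip above, "disk" here covers SSD as well as HDD, so a variation such as F2F would need its own letter added to the mapping.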

Image via Tapestorage.org

For those still interested in tape, check out the Active Archive Alliance recent posts (here), as well as the 2017 Tape Storage Council Memo and State of their industry report (here). While lower-end tape such as LTO (which is not exactly the low end it was a decade or so ago) continues to evolve, things may not be as persistent for tape at the high end. With Oracle (via its Sun/StorageTek acquisition) exiting the high-end (e.g. mainframe-focused) tape business, that leaves mainly IBM as a technology provider.

Image via Tapestorage.org

With a single tape device (e.g. drive) vendor at the high end, that could be the signal for many organizations that it is finally time to either move off tape or at least move to LTO (Linear Tape-Open) as a stepping stone (e.g. phased migration). The reason is not technical but rather business: many organizations need to have a secondary or competitive offering, or else go through an exception process.

On the other hand, while many exited the IBM mainframe server market (e.g. Fujitsu/Amdahl, HDS, NEC), Big Blue (i.e. IBM) continues to innovate and drive both revenue and margin from those platforms (hardware, software, and services). This leads me to believe that IBM will do what it can to keep its high-end tape customers supported while also providing alternative options.

What This All Means

I would not schedule the last tape funeral just yet; granted, there will continue to be periodic wakes and send-offs over the coming decade. Tape remains, for some environments, a viable data storage option when used in new ways as well as new locations, complementing flash SSD and other persistent memories (aka storage class memories) along with HDD.

Personally, I have been directly tape free for over 14 years. Granted, I have data in some clouds and object storage that may exist on a very cold data storage tier, possibly on tape, that is transparent to my use. However, just because I do not physically have tape does not mean I do not see why others still have to, or prefer to, use it for different needs.

Also, keep in mind that tape continues to be used as an economical data transport for bulk movement of data in some environments. Meanwhile, for those who only want, need or wish tape to finally go away, close your eyes, click your heels together and repeat your favorite "tape is not alive" chant three (or more) times. Keep in mind that HDDs are keeping tape alive by offloading some functions, while SSDs are keeping HDDs alive by handling tasks formerly done by spinning media. Meanwhile, tape can be, and still is, called upon by some organizations to protect or enable bulk recovery for SSDs and HDDs, even in cloud environments, granted in new and different ways.

What this all means is that as a zombie technology, declared dead for decades yet still living, there is life after death for tape, which is still alive, for now.

Ok, nuff said (for now…).

Cheers

Gs

Who Will Be At Top Of Storage World Next Decade?

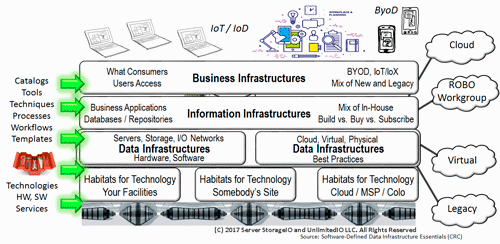

Data storage, whether hardware, legacy, new, emerging, cloud service or one of the various software defined storage (SDS) approaches, is a fundamental resource component of data infrastructures, along with compute servers, I/O networking, and management tools, techniques, processes and procedures.

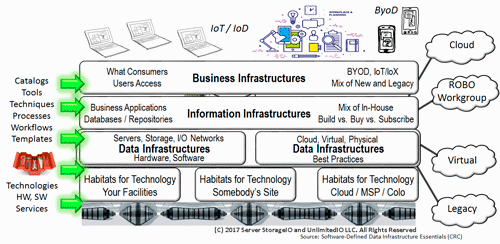

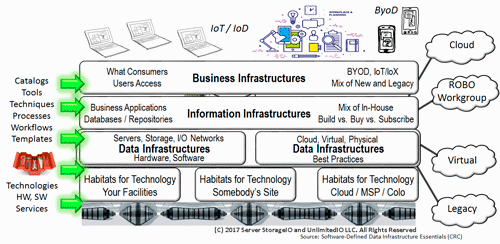

Fundamental Data Infrastructure resources

Data infrastructures include legacy as well as software defined data infrastructures (SDDI), software defined data centers (SDDC), cloud and other environments that support expanding workloads more efficiently as well as effectively (e.g. boosting productivity).

Data Infrastructure and other IT Layers (stacks and altitude levels)

Various data infrastructure resource components spanning servers, storage, I/O networks, and tools, along with hardware, software, and services, get defined as well as composed into solutions or services, which may in turn be further aggregated into more extensive, higher altitude offerings (e.g. further up the stack).

Various IT and Data Infrastructure Stack Layers (Altitude Levels)

Focus on Data Storage Present and Future Predictions

Drew Robb (@Robbdrew) has a good piece over at Enterprise Storage Forum looking at the past, present and future of who will rule the data storage world, which includes several perspectives and predictions from me as well as others. Some of the perspectives and predictions by others are more generic, with a technology trend and buzzword bingo focus, which should not be a surprise. Examples include the usual performance, Cloud and Object Storage, DPDK, RDMA/RoCE, Software-Defined, NVM/Flash/SSD, CI/HCI, and NVMe among others.

Here are some excerpts from Drew’s piece along with my perspective and prediction comments of who may rule the data storage roost in a decade:

Amazon Web Services (AWS) – AWS includes cloud and object storage in the form of S3. However, there is more to storage than object and S3, with AWS also having Elastic File System (EFS), Elastic Block Store (EBS), database, message queue and on-instance storage, among others, for traditional and emerging workloads as well as storage for the Internet of Things (IoT).

It is difficult to think of AWS not being a major player in a decade unless they totally screw up their execution in the future. Granted, some of their competitors might be working overtime putting pins and needles into Voodoo Dolls (perhaps bought via Amazon.com) while wishing for the demise of Amazon Web Services, just saying.

Voodoo Dolls and image via Amazon.com

Of course, Amazon and AWS could follow the likes of Sears (e.g. some may remember their catalog) and ignore the future, ending up on the "where are they now" list. While talking about Amazon and AWS, one has to wonder where Walmart will end up in a decade, with or without a cloud of their own.

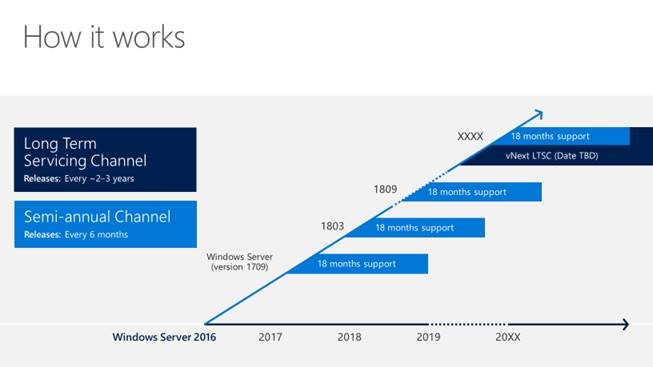

Microsoft – With Windows, Hyper-V and Azure (including Azure Stack), if there is any company in the industry outside of AWS or VMware that has quietly expanded its reach and positioning into storage, it is Microsoft, said Schulz.

Microsoft IMHO has many offerings and capabilities across different dimensions as well as playing fields. There is the installed base of Windows Servers (and desktops) that have the ability to leverage Software Defined Storage including Storage Spaces Direct (S2D), ReFS, cache and tiering among other features. In some ways I’m surprised by the number of people in the industry who are not aware of Microsoft’s capabilities from S2D and the ability to configure CI as well as HCI (Hyper Converged Infrastructure) deployments, or of Hyper-V abilities, Azure Stack to Azure among others. On the other hand, I run into Microsoft people who are not aware of the full portfolio offerings or are just focused on Azure. Needless to say, there is a lot in the Microsoft storage related portfolio as well as bigger broader data infrastructure offerings.

NetApp – Schulz thinks NetApp has the staying power to stay among the leading lights of data storage. Assuming it remains as a freestanding company and does not get acquired, he said, NetApp has the potential of expanding its portfolio with some new acquisitions. “NetApp can continue their transformation from a company with a strong focus on selling one or two products to learning how to sell the complete portfolio with diversity,” said Schulz.

NetApp has been around and survived up to now including via various acquisitions, some of which have had mixed results vs. others. However assuming NetApp can continue to reinvent themselves, focusing on selling the entire solution portfolio vs. focus on specific products, along with good execution and some more acquisitions, they have the potential for being a top player through the next decade.

Dell EMC – Dell EMC is another stalwart Schulz thinks will manage to stay on top. “Given their size and focus, Dell EMC should continue to grow, assuming execution goes well,” he said.

I hear there are some who are predicting, or have predicted, the demise of Dell EMC; granted, some of those predicted the demise of Dell and/or EMC years ago as well. Top companies can and have faded away over time, and while it is possible Dell EMC could be added to the "where are they now" list in the future, my bet is that at least while Michael Dell is still involved, they will be a top player through the next decade, unless they mess up on execution.

Various Data Infrastructures and Resources involving Data Storage

Huawei – Huawei is one of the emerging giants from China that are steadily gobbling up market share. It is now a top provider in many categories of storage, and its rapid ascendancy is unlikely to stop anytime soon. “Keep an eye on Huawei, particularly outside of the U.S. where they are starting to hit their stride,” said Schulz.

In the US, you have to look or pay attention to see or hear what Huawei is doing involving data storage, however that is different in other parts of the world. For example, I see and hear more about them in Europe than in the US. Will Huawei do more in the US in the future? Good question, keep an eye on them.

VMware – A decade ago, Storage Networking World (SNW) was by far the biggest event in data storage. Everyone who was anyone attended this twice yearly event. And then suddenly, it lost its luster. A new forum known as VMworld had emerged and took precedence. That was just one of the indicators of the disruption caused by VMware. And Schulz expects the company to continue to be a major force in storage. “VMware will remain a dominant player, expanding its role with software-defined storage,” said Schulz.

VMware has a dominant role in data storage not just because of the relationship with Dell EMC, or because of vSAN, which continues to gain in popularity, or the soon to be released VMware on AWS solution options, among others. Sure, all of those matter; however, keep in mind that VMware solutions also tie into and work with other legacy as well as software-defined storage solutions, services and tools spanning block, file, and object for virtual machines as well as containers.

"Someday soon, people are going to wake up like they did with VMware and AWS," said Schulz. "That’s when they will be asking ‘When did Microsoft get into storage like this in such a big way.’"

What the above means is that some environments may not be paying attention to what AWS, Microsoft, and VMware among others are doing, perhaps discounting them as old or existing while focusing on whatever is new, emerging, or trendy in the news this week. On the other hand, some environments may see the solution offerings from those mentioned as not relevant to their specific needs, or not capable of scaling to their requirements.

Keep in mind that it was not that long ago, just a few years, that VMware entered the market with what by today’s standards (e.g. vSAN and others) was a relatively small virtual storage appliance offering, not to mention that many people discounted and ignored VMware as a practical storage solution provider. Things and technology change, not to mention there are different needs and solution requirements for various environments. While a solution may not be applicable today, give it some time and keep an eye on it to avoid being surprised and asking how and when a particular vendor got into storage in such a big way.

Is Future Data Storage World All Cloud?

Perhaps someday everything involving data storage will be in or part of the cloud.

Does this mean everything is going to the cloud, at least in the next ten years? IMHO the simple answer is no. Even though I see more workloads, applications, and data residing in the cloud, there will also be an increase in hybrid deployments.

Note that those hybrids will span local and on-premises (or on-site if you prefer) environments, as well as different clouds or service providers. Granted, some environments are or will become all in on clouds, while others are or will become a hybrid or some variation. When it comes to clouds, do not be scared, be prepared. Also keep an eye on what is going on with containers, orchestration, and management among other related areas involving persistent storage; a good example is Dell EMCcode RexRay among others.

Various data storage focus areas along with data infrastructures.

What About Other Vendors, Solutions or Services?

In addition to those mentioned above, there are plenty of other existing, new and emerging vendors, solutions, and services to keep an eye on, look into, test and conduct a proof of concept (PoC) trial as part of being an informed data infrastructure and data storage shopper (or seller).

Keep in mind component suppliers, some of whom, like Cisco, also provide turnkey solutions that are part of other vendors’ offerings (e.g. Dell EMC VxBlock, NetApp FlexPod among others), as well as Broadcom (which includes Avago/LSI, Brocade Fibre Channel, among others), Intel (servers, I/O adapters, memory and SSDs), Mellanox, Micron, Samsung, Seagate and many others.

Others to keep an eye on include E8, Excelero, Elastifile (software defined storage), Enmotus (micro-tiering, read Server StorageIOlab report here), Everspin (persistent and storage class memories including NVDIMM), Hedvig (software defined storage), NooBaa, Nutanix, Pivot3, Rozo (software defined storage), and WekaIO (scale out elastic software defined storage, read Server StorageIO report here).

Some other software defined management tools, services, solutions and components I’m keeping an eye on, exploring, or digging deeper into (or plan to) include Blue Medora, Datadog, Dell EMCcode and RexRay docker container storage volume management, Google, HPE, IBM Bluemix Cloud aka IBM Softlayer, Kubernetes, Mangstor, OpenStack, Oracle, Retrospect, Rubrik, Quest, Starwind, Solarwinds, Storpool, Turbonomic, and Virtuozzo (software defined storage) among many others.

What about those not mentioned? Good question. Some of those I have mentioned in earlier Server StorageIO Update newsletters, and many others are mentioned in my new book "Software Defined Data Infrastructure Essentials" (CRC Press). Then there are those that will get more frequent mentions once I hear something interesting from them on a regular basis. Of course, there is also a list to be done someday that is basically "where are they now", e.g. those that have disappeared or never lived up to their full hype and marketing (or technology) promises; let’s leave that for another day.

Additional learning experiences along with common questions (and answers), as well as tips can be found in Software Defined Data Infrastructure Essentials book.

Data Infrastructures Resources (Servers, Storage, I/O Networks) enabling various services

What This All Means

It is safe to say that each new year will bring new trends, techniques, technologies, tools, features, and functionality, as well as solutions involving data storage and data infrastructures. This means a usual safe bet is to say that the current year is the most exciting and has more new things than any in the past when it comes to data infrastructures along with resources such as data storage. Keep in mind that there are many aspects to data infrastructures as well as storage, all of which are evolving. Who Will Be At Top Of Storage World Next Decade? What say you?

Ok, nuff said (for now…).

Cheers

Gs

Greg Schulz – Multi-year Microsoft MVP Cloud and Data Center Management, VMware vExpert (and vSAN). Author of Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press), Resilient Storage Networks (Elsevier) and twitter @storageio. Watch for the spring 2017 release of his new book "Software-Defined Data Infrastructure Essentials" (CRC Press).
