Volume 17, Issue 10 (October 2017)

Hello and welcome to the October 2017 issue of the Server StorageIO data infrastructure update newsletter. October has been a busy month for data infrastructure, including server storage I/O related trends, activities, news, perspectives and related topics, so let's have a look at them.


Enjoy this edition of the Server StorageIO data infrastructure update newsletter. Cheers GS

Data Infrastructure and IT Industry Activity Trends

Some recent industry activities, trends, news and announcements include:

Startup Aparavi launched with a SaaS platform for managing long-term data retention. As part of a move to streamline the acquisition of Brocade by Broadcom (formerly known as Avago), the Brocade data center Ethernet networking business is being sold to Extreme Networks. DataCore also updated their software defined storage solutions in October.

Cisco announced new storage networking products and the acquisition of BroadSoft (cloud calling and contact center solutions). As part of continued support for Fibre Channel based data infrastructure environments, Cisco announced the 1U MDS 9132T, a 32 port 32 Gbps Fibre Channel switch that supports FCP (SCSI Fibre Channel Protocol) now, with FC-NVMe support to come. Also announced was SAN telemetry (activity monitoring, insight and event streaming for analysis) in the MDS 9700 32 Gbps module. Cisco also announced interoperability with Virtual Instruments VirtualWisdom for data center and data infrastructure insight, activity monitoring and telemetry, eliminating the reliance on hardware based probes, along with Fibre Channel N-Port virtualization on the Nexus 9300-FX data center switch.

Commvault announced scale-out data protection with ScaleProtect for Cisco UCS platforms, along with their HyperScale appliance and HyperScale software. IBM had several October announcements, including LTO 8 related items, FlashSystem V9000 (All Flash Array) updates covering the enclosure as well as hardware based compression, and the FlashSystem A9000 leveraging 3D TLC NAND flash (lower cost, higher capacity), among others.

There is plenty of content (blogs, articles, podcasts, webinars, videos, white papers, presentations) on when to use containers, microservices and serverless compute, including Mesos, Kubernetes and Docker among others. What about when not to use those approaches, or caveats to be aware of? Here is such a piece (via Red Hat) to have a look at.
Granted, if you are part of the microservices cheerleading bandwagon crowd, you might not agree with the author's points; after all, everything is not the same in data centers and data infrastructures. Speaking of serverless and containers, here is a good post about Docker Swarm vs. Kubernetes management over at UpCloud. In Microsoft and Azure related activity, despite some early speculation in some venues that Storage Spaces Direct (S2D) was being discontinued because it was not part of Windows Server version 1709, the reality is that S2D is very much alive.

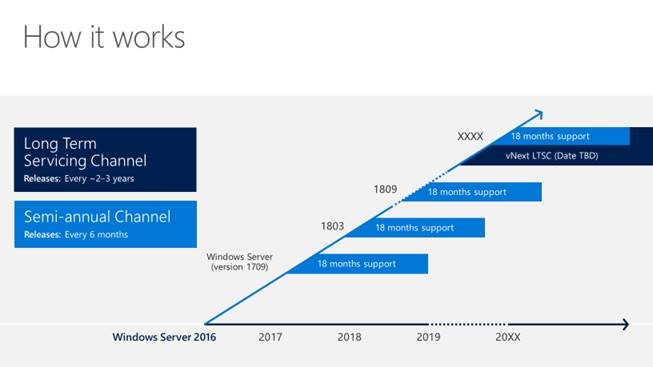

However, some clarification is needed, as the initial speculation may have been due to a lack of understanding of the new Microsoft release cycle. Microsoft has moved to Semi-Annual Channel (SAC) releases that introduce new features in advance of the Long Term Servicing Channel (LTSC). LTSC releases are the Windows and Windows Server releases you are probably familiar with, where updates are spread out over time for a given major version (e.g. going from Server 2012 to Server 2012 R2 and so forth). The current Windows Server LTSC is the base release introduced in the fall of 2016 along with its incremental updates. By comparison, think of SAC as a branch channel for early adopters to get new features; with 1709 (i.e. September 2017), the focus is on containers. A mistake that has been made is to assume that a SAC release is a new major LTSC release, which is probably why some thought S2D was dead, as it is not in SAC 1709. Indications from Microsoft are that there will be S2D enhancements in the next SAC as well as in the future LTSC.

For those interested in IoT, check out this Microsoft Azure IoT Hub and device twin document. Here is a post by Thomas Maurer looking at 10 hidden Hyper-V features to know about. In other activity, Minio announced experimental AWS S3 API support for the Backblaze storage service. Software defined serverless storage startup OpenIO received $5M USD in additional funding. Quantum and other LTO Program vendors have announced support for the new LTO version 8 tape drives and media. In addition to LTO 8, new roadmaps extending out to LTO 12 are outlined here. Also, VMware vCloud Air is now hosted by OVH. Western Digital Corporation (WDC) announced Microwave Assisted Magnetic Recording (MAMR) enabled Hard Disk Drives (HDD) that will enable future, larger capacity devices to be brought to market. Check out other industry news, comments, trends and perspectives here.

Server StorageIO Commentary in the news

Recent Server StorageIO industry trends and perspectives commentary in the news. Via HPE Insights: Comments on public cloud versus on-prem storage. View more Server, Storage and I/O trends and perspectives comments here.

Server StorageIOblog Posts

Recent and popular Server StorageIOblog posts include:

In Case You Missed It #ICYMI

View other recent as well as past StorageIOblog posts here.

Server StorageIO Data Infrastructure Tips and Articles

Recent Server StorageIO industry trends and perspectives commentary in the news. Via EnterpriseStorageForum: Comments on Who Will Rule the Storage World? View more Server, Storage and I/O trends and perspectives comments here.

Server StorageIO Recommended Reading (Watching and Listening) List

In addition to my own books, including Software Defined Data Infrastructure Essentials (CRC Press 2017), the following are Server StorageIO recommended reading, watching and listening list items. The list includes various IT, data infrastructure and related topics. The Intel Recommended Reading List (IRRL) for developers is a good resource to check out. It's October, which means that it is also Blogtober; check out some of the blogs and posts occurring during October here. For those involved with VMware, check out Frank Denneman's VMware vSphere 6.5 host resources book here at Amazon.com. Docker: Up & Running: Shipping Reliable Containers in Production by Karl Matthias & Sean P. Kane via Amazon.com here. Essential Virtual SAN (VSAN): Administrator's Guide to VMware Virtual SAN, 2nd ed. by Cormac Hogan & Duncan Epping via Amazon.com here. Hadoop: The Definitive Guide: Storage and Analysis at Internet Scale by Tom White via Amazon.com here. Cisco IOS Cookbook: Field tested solutions to Cisco Router Problems by Kevin Dooley and Ian Brown via Amazon.com here. Watch for more items to be added to the recommended reading list book shelf soon.

Events and Activities

Recent and upcoming event activities. Nov. 9, 2017 – Webinar – All You Need To Know about ROBO Data Protection Backup. See more webinars and activities on the Server StorageIO Events page here.

Server StorageIO Industry Resources and Links

Useful links and pages:

Ok, nuff said, for now.

GS

Greg Schulz – Microsoft MVP Cloud and Data Center Management, VMware vExpert 2010-2017 (vSAN and vCloud). Author of Software Defined Data Infrastructure Essentials (CRC Press), as well as Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press) and Resilient Storage Networks (Elsevier), and on Twitter @storageio. Courteous comments are welcome for consideration. First published on https://storageioblog.com; any reproduction in whole, in part, with changes to content, without source attribution under title or without permission is forbidden.

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2023 Server StorageIO(R) and UnlimitedIO. All Rights Reserved.