Part 2 – Which HDD for Content Applications – HDD Testing

Updated 1/23/2018

Which enterprise HDD to use with a content server: HDD testing, how and what to do

Insight for effective server storage I/O decision making

Server StorageIO Lab Review

This is the second in a multi-part series (read part one here) based on a white paper hands-on lab report I did, courtesy of Servers Direct and Seagate, that you can read in PDF form here. The focus is on the Servers Direct (www.serversdirect.com) converged Content Solution platforms with Seagate Enterprise Hard Disk Drives (HDD’s). In this post we look at some decisions and configuration choices to make for testing content application servers, as well as project planning.

Content Solution Test Objectives

In a short period, collect performance and other server storage I/O decision-making information on various HDD’s running different content workloads.

Working with the Servers Direct staff, a suitable content solution platform test configuration was created. In addition to providing two Intel-based content servers, Servers Direct worked with their partner Seagate to arrange for various enterprise-class HDD’s to be evaluated. For this series of content application tests, being short on time, I chose to run some simple workloads including database, basic file (large and small) processing and general performance characterization.

Content Solution Decision Making

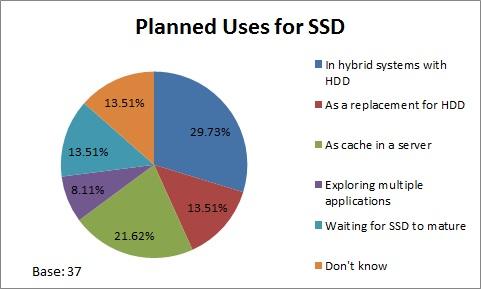

Knowing how Non-Volatile Memory (NVM) NAND flash SSD (1) devices (drives and PCIe cards) perform, what would be the best HDD based storage option for my given set of applications? Different applications have various performance, capacity and budget considerations. Different types of Seagate Enterprise class 2.5” Small Form Factor (SFF) HDD’s were tested.

While revolutions per minute (RPM) still plays a role in HDD performance, there are other factors including internal processing capabilities, software or firmware algorithm optimization, and caching. Most HDD’s today have some amount of DRAM for read caching and other operations. Seagate Enterprise Performance HDD’s with the enhanced caching feature (2) are examples of devices that accelerate storage I/O speed vs. traditional 10K and 15K RPM drives.
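To put the RPM factor in perspective, here is a minimal sketch (not part of the lab report) of the standard average rotational latency calculation: on average the platter has to spin half a revolution before the requested sector reaches the head. The function name is illustrative, and the figures cover rotation only, leaving out seek time, transfer time and any benefit from caching.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency in milliseconds: the time for half a revolution."""
    if rpm <= 0:
        raise ValueError("rpm must be positive")
    ms_per_revolution = 60_000.0 / rpm  # 60,000 ms in one minute
    return 0.5 * ms_per_revolution


if __name__ == "__main__":
    # Common enterprise HDD spindle speeds
    for rpm in (7_200, 10_000, 15_000):
        print(f"{rpm:>6} RPM: {avg_rotational_latency_ms(rpm):.2f} ms")
```

Going from 10K to 15K RPM trims average rotational latency from 3 ms to 2 ms, which is one reason firmware and caching optimizations can matter as much as spindle speed.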

Project Planning And Preparation

Workloads to be tested included:

- Database read/writes

- Large file processing

- Small file processing

- General I/O profile

Project testing consisted of five phases, some of which overlapped with others:

Phase 1 – Plan

Identify candidate workloads that could be run in the given amount of time, determine time schedules and resource availability, create a project plan.

Phase 2 – Define

Define the hardware and software that make up the test platform.

Phase 3 – Setup

The objective was to assess plug-play capability of the server, storage and I/O networking hardware with a Linux OS before moving on to the reported workloads in the next phase. Initial setup and configuration of hardware and software, installation of additional devices along with software configuration, troubleshooting, and learning as applicable. This phase consisted of using Ubuntu Linux 14.04 server as the operating system (OS) along with MySQL 5.6 as a database server during initial hands-on experience.

Phase 4 – Execute

This consisted of using Windows 2012 R2 server as the OS along with Microsoft SQL Server on the system under test (SUT) to support various workloads. Results of this phase are reported below.

Phase 5 – Analyze

Results from the workloads run in phase 4 were analyzed and summarized into this document.

(Note 1) Refer to Seagate 1200 12 Gbps Enterprise SAS SSD StorageIO lab review

(Note 2) Refer to Enterprise SSHD and Flash SSD Part of an Enterprise Tiered Storage Strategy

Planning And Preparing The Tests

As with most any project there were constraints to contend with and work around.

Test constraints included:

- Short-time window

- Hardware availability

- Amount of hardware

- Software availability

The three most important constraints and considerations for this project were:

- Time – This was a project with a very short time “runway”, something common in most customer environments that are looking to make knowledgeable server storage I/O decisions.

- Amount of hardware – Limited amount of DRAM main memory, sixteen 2.5” internal hot-swap storage slots for HDD’s as well as SSDs. Note that for a production content solution platform, additional DRAM can easily be added, along with extra external storage enclosures to scale memory and storage capacity to fit your needs.

- Software availability – Utilize common software and management tools publicly available so anybody could leverage those in their own environment and tests.

The following content application workloads were profiled:

- Database reads/writes – Updates, inserts, read queries for a content environment

- Large file processing – Streaming of large video, images or other content objects.

- Small file processing – Processing of many small files found in some content applications

- General I/O profile – IOPS, bandwidth and response time relevant to content applications
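The three metrics in the general I/O profile are related, so it helps to look at them together. The sketch below is an illustration only: the function names are hypothetical and the sample numbers are made up for the example, not results from this lab. It shows two well-known relationships: bandwidth is IOPS multiplied by I/O size, and, by Little's Law, the average number of outstanding I/Os is IOPS multiplied by average response time.

```python
def bandwidth_mib_per_s(iops: float, io_size_kib: float) -> float:
    """Bandwidth in MiB/s for a given I/O rate and I/O size in KiB."""
    return iops * io_size_kib / 1024.0


def avg_outstanding_ios(iops: float, response_time_ms: float) -> float:
    """Little's Law: average I/Os in flight = arrival rate x time in system."""
    return iops * (response_time_ms / 1000.0)


if __name__ == "__main__":
    # Hypothetical sample values, NOT measurements from the lab report
    iops = 200.0             # I/O operations per second
    io_size_kib = 8.0        # small-block, database-style I/O
    response_time_ms = 5.0   # average response time per I/O

    print(f"Bandwidth: {bandwidth_mib_per_s(iops, io_size_kib):.2f} MiB/s")
    print(f"Outstanding I/Os: {avg_outstanding_ios(iops, response_time_ms):.1f}")
```

This is why small-file and database workloads are usually judged on IOPS and response time, while large-file streaming is judged on bandwidth: the same drive can show a high I/O rate with modest bandwidth, or the reverse, depending on I/O size.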

Where To Learn More

- Part 1 of this series – Trends and Content Applications Servers

- Part 2 of this series – Content applications server decisions and testing plans

- Part 3 of this series – Test hardware and software configuration

- Part 4 of this series – Large file I/O processing

- Part 5 of this series – Small file I/O processing

- Part 6 of this series – General I/O processing

- Part 7 of this series – How HDD’s continue to evolve over different generations and wrap up

- As the platters spin, HDD’s for cloud, virtual and traditional storage environments

- How many IOPS can a HDD, HHDD or SSD do?

- Hard Disk Drives (HDD) for Virtual Environments

- Server and Storage I/O performance and benchmarking tools

- Additional Server StorageIO White Papers and Lab Reports, Solutions Briefs and Profiles, Tips and Articles

- PDF White Paper version of this post

- www.thenvmeplace.com and www.thessdplace.com

Additional learning experiences along with common questions (and answers), as well as tips can be found in Software Defined Data Infrastructure Essentials book.

What This All Means

There are many different types of content applications, ranging from little data databases to big data analytics as well as very big fast data such as video. Likewise, there are various workloads and characteristics to test. The best tests and metrics are those that apply to your environment and application needs.

Continue reading part three of this multi-part series here looking at how the systems and HDD’s were configured and tested.

Ok, nuff said, for now.

Gs

Greg Schulz – Microsoft MVP Cloud and Data Center Management, VMware vExpert 2010-2017 (vSAN and vCloud). Author of Software Defined Data Infrastructure Essentials (CRC Press), as well as Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press), Resilient Storage Networks (Elsevier) and twitter @storageio. Courteous comments are welcome for consideration. First published on https://storageioblog.com any reproduction in whole, in part, with changes to content, without source attribution under title or without permission is forbidden.

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO. All Rights Reserved. StorageIO is a registered Trade Mark (TM) of Server StorageIO.