Collecting Transactions Per Minute from SQL Server and HammerDB

When using benchmark or workload generation tools such as HammerDB, I needed a way to capture and log performance metrics such as transactions per minute. For example, HammerDB can simulate an application making database requests and performing various transactions as part of testing an overall system solution, including server and storage I/O activity. This post looks at the problem I wanted to address and the solution I created after spending time searching for one (still searching, btw).

The Problem, Issue, Challenge, Opportunity and Need

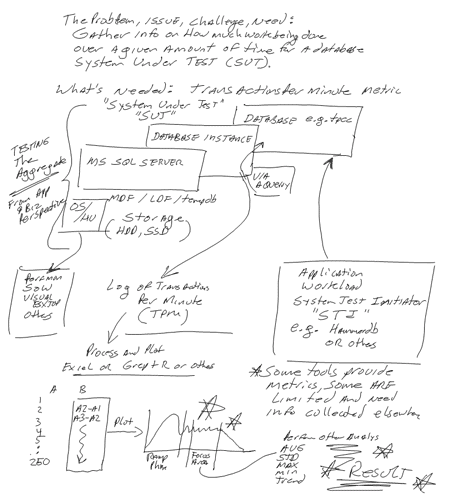

The challenge is to collect application performance metrics such as transactions per minute from a workload using a database. The workload or benchmark tool (in this case HammerDB) is the System Test Initiator (STI) that drives the activity (e.g. database requests) to a System Under Test (SUT). In this example the SUT is a Microsoft SQL Server running on a Windows Server 2012 R2 system. What I need is to collect the transaction rate per minute while the STI is generating a particular workload, and log it to a file for later analysis.

Understanding the challenge and designing a strategy

If you have ever used benchmark or workload generation tools such as Quest Benchmark Factory (part of the Toad tools collection), you might be spoiled by how it not only generates the workload but also collects, processes, presents and even stores the results for database workloads such as TPC simulations. In this situation, Transaction Processing Performance Council (TPC) like workloads need to be run and performance metrics collected. Let's leave Benchmark Factory for a future discussion and focus instead on a free tool called HammerDB, and more specifically on how to collect transactions per minute metrics from Microsoft SQL Server. While the focus is SQL Server, you can easily adapt the approach for MySQL among others, not to mention there are other tools such as Sysbench and Aerospike among others.

The following image (created using my Livescribe Echo digital pen) outlines the problem and sketches out a possible solution design. My solution grabs the count of transactions that have occurred once every minute for a given amount of time. Later, in post-processing (you could also do this in the SQL script), I take each new transaction count (which is cumulative) and subtract the count from the earlier interval, which yields the transactions per minute (see examples later in this post).

The problem and challenge, a way to collect Transactions Per Minute (TPM)

Finding a solution

HammerDB displays results via its GUI, and perhaps there is a way or some trick to get it to log results to a file or some other means. However, after searching the web, I found that it was quicker to come up with my own solution: decide how to collect and report the transactions per minute (or per second or some other interval) from Microsoft SQL Server. That meant finding which performance counters and metrics are available from SQL Server, how to collect them, and how to log them to a file for processing. In practice, a SQL Server script file needed to be created that runs in a loop, collecting at a specified interval for a given amount of time, for example once a minute for several hours.

Taking action

The following is a script that I came up with. It is far from optimal, however it gets the job done and is a starting point for adding more capabilities or optimizations.

In the following example, set loopcount to the number of minutes to collect samples for. Note that if you are running a workload test for eight (8) hours with a 30 minute ramp-up time, you would want to use a loopcount (the number of minutes to collect for) of 480 + 30 + 10 = 520. The extra 10 minutes allows for some samples before the ramp-up and start of the workload, as well as a pronounced run of samples at the end of the test. Add or subtract minutes as needed, but keep in mind that it is better to collect a few extra minutes than to wish you had them.
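If you prefer to compute the value rather than add it up by hand, the arithmetic can be sketched in a few lines of Python. The function name and the default of 10 padding minutes are my own assumptions for illustration, not part of the SQL script.

```python
def minutes_to_collect(test_minutes, ramp_minutes, padding_minutes=10):
    """Return how many one-minute samples to collect (hypothetical helper).

    padding_minutes covers the extra samples taken before the ramp-up
    starts and after the workload ends.
    """
    return test_minutes + ramp_minutes + padding_minutes


if __name__ == "__main__":
    # Eight-hour test with a 30 minute ramp-up and 10 extra minutes.
    print(minutes_to_collect(8 * 60, 30))  # 520
```

The result is the number you would assign to @loopcount in the script.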

-- Note and disclaimer:

--

-- Use of this code sample is at your own risk with Server StorageIO and UnlimitedIO LLC

-- assuming no responsibility for its use or consequences. You are free to use this as is

-- for non-commercial scenarios with no warranty implied. However feel free to enhance and

-- share those enhancements with others e.g. pay it forward.

--

DECLARE @cntr_value bigint;

DECLARE @loopcount bigint; -- how many minutes to take samples for

set @loopcount = 240

SELECT @cntr_value = cntr_value

FROM sys.dm_os_performance_counters

WHERE counter_name = 'transactions/sec'

AND object_name = 'MSSQL$DBIO:Databases'

AND instance_name = 'tpcc' ; print @cntr_value;

WAITFOR DELAY '00:00:01'

--

-- Start loop to collect TPM every minute

--

while @loopcount <> 0

begin

SELECT @cntr_value = cntr_value

FROM sys.dm_os_performance_counters

WHERE counter_name = 'transactions/sec'

AND object_name = 'MSSQL$DBIO:Databases'

AND instance_name = 'tpcc' ; print @cntr_value;

WAITFOR DELAY '00:01:00'

set @loopcount = @loopcount - 1

end

--

-- All done with loop, write out the last value

--

SELECT @cntr_value = cntr_value

FROM sys.dm_os_performance_counters

WHERE counter_name = 'transactions/sec'

AND object_name = 'MSSQL$DBIO:Databases'

AND instance_name = 'tpcc' ; print @cntr_value;

--

-- End of script

--

The above example has loopcount set to 240 for a 200 minute test with a 30 minute ramp and 10 extra minutes of samples. I use a couple of those minutes to make sure that the system test initiator (STI) such as HammerDB is configured and ready to start executing transactions. You could also put this along with your HammerDB items into a script file for further automation, however I will leave that exercise up to you.
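As one possible starting point for that exercise, here is a minimal Python sketch that launches the collection script with the sqlcmd command-line utility, using its -S (server), -E (Windows authentication), -i (input script) and -o (output file) options. The server name .\DBIO, the file names collect_tpm.sql and tpm_counts.txt, and the function names are all assumptions for illustration; substitute your own.

```python
import subprocess


def build_sqlcmd_args(server, script_path, output_path):
    """Build a sqlcmd argument list (hypothetical helper).

    -S names the server or instance, -E uses Windows authentication,
    -i is the T-SQL script to run and -o is the file that receives output.
    """
    return ["sqlcmd", "-S", server, "-E", "-i", script_path, "-o", output_path]


def start_collector(server, script_path, output_path):
    """Start the collection script in the background and return its process."""
    return subprocess.Popen(build_sqlcmd_args(server, script_path, output_path))


if __name__ == "__main__":
    # Assumed values: a local named instance and made-up file names.
    args = build_sqlcmd_args(r".\DBIO", "collect_tpm.sql", "tpm_counts.txt")
    print(" ".join(args))
```

Calling start_collector() just before kicking off the HammerDB workload would let both run side by side. Starting HammerDB itself is left out here because how you drive it depends on your setup.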

For those of you familiar with SQL and SQL Server, you probably already see some things to improve, restyle or simply adapt to your own preference, which is great, go for it. Also note that I’m only selecting one counter from the performance counters; there are many others, which you can easily discover with a couple of SQL commands (e.g. a select that specifies the database instance and object name). In the script, 'MSSQL$DBIO:Databases' refers to my named SQL Server instance (DBIO) and 'tpcc' is the database name, so change both to match your environment. The key is accessing the items in sys.dm_os_performance_counters of your SQL Server database instance.

The results

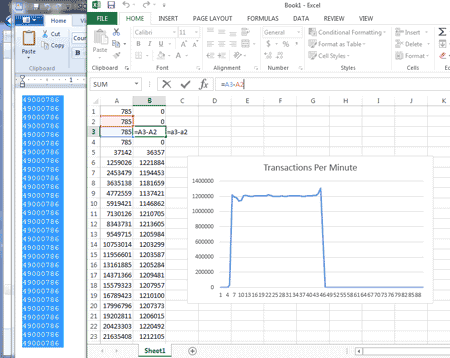

The output from the above is a list of cumulative numbers as shown below which you will need to post process (or add a calculation to the above script). Note that part of running the script is specifying an output file which I show later.

785

785

785

785

37142

1259026

2453479

3635138

Implementing the solution

You can set up the above script to run as part of a larger automation shell or batch script, however for simplicity I’m showing it here using Microsoft SQL Server Management Studio (SSMS).

Microsoft SQL Server Management Studio with script to collect Transactions Per Minute (TPM)

The following image shows how to specify an output file for the results to be logged to when using SQL Server Management Studio to run the TPM collection script.

Microsoft SQL Server Management Studio specify output file

With the SQL Server script running to collect results, and the HammerDB workload running to generate activity, the following shows Quest Spotlight on Windows (SoW) displaying Windows Server 2012 R2 operating system level performance including CPU, memory, paging and other activity. Note that this example had both the system test initiator (STI), which is HammerDB, and the system under test (SUT), which is Microsoft SQL Server, on the same server.

Quest Spotlight on Windows showing Windows Server performance activity

Results and post-processing

As part of post-processing, simply use your favorite tool or script. What I often do is pull the numbers into an Excel spreadsheet and create a new column of numbers that computes and shows the difference between each step (see below). While in Excel I then plot the numbers as needed, which can also be done via a shell script and other plotting tools such as R.

In the following example, the results are imported into Excel (or your favorite tool or script), where I then add a column (B) that simply computes the difference between the current and earlier counter values. For example, cell B2 = A2-A1, B3 = A3-A2 and so forth for the rest of the numbers in column A. I then plot the numbers in column B to show the transaction rates over time, which can then be used for various things.

Results processed in Excel and plotted

Note that if the above results seem too good to be true, they are: these were cached results to show the tools and data collection process as opposed to the real work being done, at least for now…
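If you would rather script the column B calculation than do it in Excel, the following is a minimal Python sketch of the same idea: read the cumulative counts from the output file, subtract each earlier value from the next one, and write both columns to a CSV file for plotting. The function names and the CSV column names are my own assumptions, and the sketch assumes the output file has one counter value per line.

```python
import csv


def read_counts(path):
    """Read one cumulative counter value per line, skipping other lines."""
    counts = []
    with open(path) as f:
        for line in f:
            text = line.strip()
            if text.isdigit():  # ignore blank lines and any non-numeric text
                counts.append(int(text))
    return counts


def per_interval(counts):
    """Difference between consecutive samples (B2 = A2 - A1, B3 = A3 - A2, ...)."""
    return [later - earlier for earlier, later in zip(counts, counts[1:])]


def write_csv(counts, path):
    """Write sample number, cumulative count and per-interval transactions."""
    deltas = [""] + per_interval(counts)  # first sample has no earlier value
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["sample", "cumulative", "transactions_per_interval"])
        for i, (count, delta) in enumerate(zip(counts, deltas), start=1):
            writer.writerow([i, count, delta])


if __name__ == "__main__":
    # The cumulative values shown in the results section of this post.
    sample = [785, 785, 785, 785, 37142, 1259026, 2453479, 3635138]
    print(per_interval(sample))
    # [0, 0, 0, 36357, 1221884, 1194453, 1181659]
```

One thing to keep in mind with the collection script as written: the first two samples are taken only one second apart (the initial WAITFOR DELAY '00:00:01'), so the first difference covers one second rather than a full minute.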

Where to learn more

Here are some extra links to have a look at:

How to test your HDD, SSD or all flash array (AFA) storage fundamentals

Server and Storage I/O Benchmarking 101 for Smarties

Server and Storage I/O Benchmark Tools: Microsoft Diskspd (Part I)

The SSD Place (collection of flash and SSD resources)

Server and Storage I/O Benchmarking and Performance Resources

I/O, I/O how well do you know about good or bad server and storage I/Os?

What this all means and wrap-up

There are probably many ways to fine-tune and optimize the above script. Likewise, there may even be some existing tool, plug-in, add-on module, or configuration setting that allows HammerDB to log the transaction activity rates to a file vs. simply showing them on a screen. However for now, this is a workaround that I have found for when I need to collect transaction activity performance data with HammerDB and SQL Server.

Ok, nuff said, for now…

Cheers gs

Greg Schulz – Author Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press) and Resilient Storage Networks (Elsevier)

twitter @storageio

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO LLC All Rights Reserved