Server Storage I/O Cables Connectors Chargers & other Geek Gifts

This is part one of a two-part series on what to get a geek for a gift; read part two here.

It is that time of the year when annual predictions are made for the upcoming year, including those that will be repeated next year or that were also made last year.

It’s also the time of the year to get various projects wrapped up, line up new activities, get the book-keeping things ready for year-end processing and taxes, as well as other things.

It’s also that time of the year to do some budget and project planning, including upgrades, replacements and enhancements, while balancing an over-subscribed holiday party schedule some of you may have.

Let’s not forget getting ready for vacations, perhaps time off from work with some time spent upgrading your home lab or other projects.

Then there are the gift lists, or trying to figure out what to get that difficult-to-shop-for person, particularly geeks who may have everything, or want the latest and greatest that others have, or something their peers don’t have yet.

Sure, I have a DJI Phantom II on my wish list, however I also have other things on my needs list (e.g. what I really need and want vs. what would be fun to wish for).

Image via DJI.com, click on image to learn more and compare models

So here are some things for the geek who may have everything or is up on having the latest and greatest, yet forgot or didn’t know about some of these things.

Not to mention, some of these might seem really simple and low-cost; think of them like a Lego block or erector set part where your imagination is the only boundary on how to use them. Also, most if not all of these are budget friendly, particularly if you shop around.

Replace a CD/DVD with 4 x 2.5″ HDD’s or SSD’s

So you need to add some 2.5" SAS or SATA HDD’s, SSD’s, HHDD’s/SSHD’s to your server to support your VMware ESXi, Microsoft Hyper-V, KVM, Xen, OpenStack, Hadoop or legacy *nix or Windows environment, or perhaps a gaming system. The challenge is that you are out of disk drive bay slots and you want things neatly organized vs. a rat’s nest of cables hanging out of your system. No worries: assuming your server has an empty media bay (e.g. those 5.25" slots where CDs/DVDs or really old HDD’s go), or if you can give up the CD/DVD, use that bay and its power connector to add one of these. This is a 4 x 2.5" SAS and SATA drive bay that has a common power connector (Molex male), with each drive bay having its own SATA drive connection. Because each drive has its own SATA connection, you can map the drives to an available on-board SATA port attached to a SAS or SATA controller, or attach them to an available port on a RAID adapter using a cable such as small form factor (SFF) 8087 to SATA.

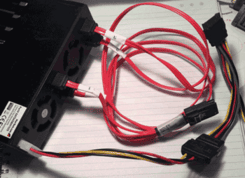

(Left) Rear view with Molex power and SATA cables (Right) front view

I have a few of these in different systems and what I like about them is that they support different drive speeds, plus they will accept a SAS drive where many enclosures in this category only support SATA. Once you mount your 2.5" HDD or SSD using screws, you can hot swap the drives (requires controller and OS support) and move them between other similar enclosures as needed. The other thing I like is that there are front indicator lights, and because each drive has its own separate connection, you can attach some of the drives to a RAID adapter while others are connected to on-board SATA ports.

Power connections

Depending on the type of your server, you may have Molex, SATA or some other type of power connections. You can use different power connection cables to go from one type (Molex) to another, create a connection for two devices, or create an extension to reach hard-to-get-to mounting locations.

Warning and disclosure note: keep in mind how much power you are drawing when attaching devices so as not to cause an electrical or fire hazard. Follow the manufacturer’s instructions and specifications, doing so at your own risk! After all, just like Clark Griswold in National Lampoon’s Christmas Vacation, who found you could attach extension cords to splitters to splitters and fan out to have many lights attached, you don’t want to cause a fire or blackout when you plug too many drives in.
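As a rough illustration of that warning, here is a minimal sketch of a power budget check before hanging more drives off one power lead. The per-drive wattages, the 30 watt budget and the 80% headroom factor are all made-up assumptions for the example; use the numbers from your drive and power supply specifications instead.

```python
# Minimal power budget sketch. All figures are hypothetical examples,
# not values from any drive or power supply datasheet.

def total_draw_watts(device_watts):
    """Sum the worst-case draw of every device sharing one power lead."""
    return sum(device_watts)


def fits_budget(device_watts, budget_watts, headroom=0.8):
    """Return True if the total draw stays within a derated budget.

    headroom=0.8 is an assumed safety factor (use only 80% of the budget).
    """
    return total_draw_watts(device_watts) <= budget_watts * headroom


if __name__ == "__main__":
    drives = [5.0, 5.0, 3.0, 3.0]  # assumed peak watts for four 2.5" drives
    print("Total draw (watts):", total_draw_watts(drives))
    print("Within budget:", fits_budget(drives, budget_watts=30.0))
```

If the check comes back False, that is a hint to move some drives to a different power lead rather than adding another splitter.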

National Lampoon’s Christmas Vacation

Measuring Power

Ok, so you do not want to do a Clark Griswold (see above video) and overload a power circuit, or perhaps you simply want to know how many watts or amps you are drawing, or what the quality of your voltage is.

There are many types of power meters at various prices; some even have interfaces where you can grab event data to correlate with server storage I/O networking performance to do things such as IOPS per watt among other metrics. Speaking of IOPS per watt, check out the SNIA Emerald site where they have some good tools including a benchmark script that uses Vdbench to drive a hot band workload (e.g. basically kick the crap out of a storage system).
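For a sense of what an IOPS per watt number looks like, here is a minimal sketch that divides a measured I/O rate by the average of a few power meter readings. This is a simple ratio for illustration only, not the SNIA Emerald measurement method, and the sample readings are invented for the example.

```python
# Simple IOPS per watt ratio. The sample numbers below are made up;
# this is not the SNIA Emerald methodology.
from statistics import mean


def iops_per_watt(iops, watt_samples):
    """Divide an I/O rate by the average of the power readings taken
    while the workload was running."""
    avg_watts = mean(watt_samples)
    if avg_watts <= 0:
        raise ValueError("average power must be greater than zero")
    return iops / avg_watts


if __name__ == "__main__":
    readings = [240, 250, 260]  # assumed watts read off a meter during the test
    print("IOPS per watt:", iops_per_watt(20000, readings))
```

The important part is lining up the power readings with the same time window as the workload, otherwise the ratio does not mean much.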

Back to power meters, I like the Kill A Watt series of meters as they give good info about amps, volts, power quality. I have these plugged into outlets so I can see how much power is being used by the battery backup units (BBU) aka UPS that also serve as power surge filters. If needed I can move these further downstream to watch the power intake of a specific server, storage, network or other device.

Standby and backup power

Electrical power surge strips should be a given or considered common sense. However, what is or should be common sense should be repeated so that it remains common sense: you should be using power surge strips or other devices.

Standby, UPS and BBU

For most situations a good surge suppressor will cover short power transients.

Image via APC and model similar to those that I have

For slightly longer power outages of a few seconds to minutes, that’s where battery backup (BBU) units that also have surge suppression come into play. There are many types and sizes with various features to meet your needs and budget. I have several of these in a couple of different sizes, not only for servers, storage and networking equipment (including some WiFi access points, routers, etc.); I also have them for home things such as satellite DVR’s. However, not everything needs to stay on, while other things simply need to stay on long enough to shut down manually or via automated power-off sequences.

Alternate Power Generation

Generators are not just for the rich and famous or large data centers; like other technologies they are available in different sizes, power capacities and fuel sources, with manual or automated operation, among other things.

Image via Kohler Power similar to model that I have

Note that even with a typical generator there will be a time gap from the time power goes off until the generator starts, stabilizes and you have good power. That’s where the BBU and UPS mentioned above come into play to bridge those time gaps, which in my case is about 25-30 seconds. Btw, knowing how much power your technology is drawing using tools such as the Kill A Watt is part of the planning process to avoid surprises.
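To put rough numbers on bridging that gap, here is a back-of-the-envelope sketch that estimates battery runtime from a measured load and compares it to the generator start-up gap. The battery capacity, inverter efficiency and safety margin are assumptions for the example, and real UPS runtime is not linear with load, so treat the vendor runtime charts as the real answer.

```python
# Back-of-the-envelope UPS runtime estimate. Capacity, efficiency and
# margin values are hypothetical; real runtime varies with load and
# battery age, so check the vendor runtime charts.

def estimated_runtime_seconds(battery_wh, load_watts, efficiency=0.85):
    """Estimate runtime as usable watt-hours divided by load, in seconds."""
    if load_watts <= 0:
        raise ValueError("load must be greater than zero")
    return battery_wh * efficiency / load_watts * 3600


def bridges_gap(runtime_seconds, gap_seconds, margin=2.0):
    """Return True if runtime covers the gap with an assumed safety margin."""
    return runtime_seconds >= gap_seconds * margin


if __name__ == "__main__":
    runtime = estimated_runtime_seconds(battery_wh=100, load_watts=300)
    print("Estimated runtime (seconds):", round(runtime))
    print("Covers a 30 second generator gap:", bridges_gap(runtime, 30))
```

The load figure is where a meter such as the Kill A Watt earns its keep, since a guess there throws off everything downstream.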

What about Solar Power

Yup, whether it is to fit in and be green, or simply to get some electrical power when or where it is needed to charge a battery or power some device, these small solar power devices are very handy.

Image via Amazon.com

Image via Amazon.com

For example, you can get or easily make an adapter to charge laptops and cell phones, or even power them for normal use (check the manufacturer’s information on power usage, amp and voltage draws, among other warnings, to prevent fire and other things). Btw, not only are these handy for computer related things, they also work great for keeping batteries on my fishing boat charged so that I have my fish finder and other electronics, just saying.

Fire suppression

How about a new or updated smoke and fire detection alarm monitor, as well as fire extinguisher for the geek’s software defined hardware that runs on power (electrical or battery)?

The following is from the site Fire Extinguisher 101 where you can learn more about different types of suppression technologies.

Image via Fire Extinguisher 101

- Class A extinguishers are for ordinary combustible materials such as paper, wood, cardboard, and most plastics. The numerical rating on these types of extinguishers indicates the amount of water it holds and the amount of fire it can extinguish. Geometric symbol (green triangle)

- Class B fires involve flammable or combustible liquids such as gasoline, kerosene, grease and oil. The numerical rating for class B extinguishers indicates the approximate number of square feet of fire it can extinguish. Geometric symbol (red square)

- Class C fires involve electrical equipment, such as appliances, wiring, circuit breakers and outlets. Never use water to extinguish class C fires – the risk of electrical shock is far too great! Class C extinguishers do not have a numerical rating. The C classification means the extinguishing agent is non-conductive. Geometric symbol (blue circle)

- Class D fire extinguishers are commonly found in a chemical laboratory. They are for fires that involve combustible metals, such as magnesium, titanium, potassium and sodium. These types of extinguishers also have no numerical rating, nor are they given a multi-purpose rating – they are designed for class D fires only. Geometric symbol (Yellow Decagon)

- Class K fire extinguishers are for fires that involve cooking oils, trans-fats, or fats in cooking appliances and are typically found in restaurant and cafeteria kitchens. Geometric symbol (black hexagon)

Wrap up for part I

This wraps up part I of what to get a geek V2014; continue reading part II here.

Ok, nuff said, for now…

Cheers gs

Greg Schulz – Author Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press) and Resilient Storage Networks (Elsevier)

twitter @storageio

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO LLC All Rights Reserved
