XtremIO flash SSD more than storage I/O speed

Following up part I of this two-part series, here are more more details, insights and perspectives about EMC XtremIO and it’s generally availability that were announced today.

XtremIO the basics

- All flash Solid State Device (SSD) based solution

- Cluster of up to four X-Brick nodes today

- X-Bricks available in 10TB increments today, 20TB in January 2014

- 25 eMLC SSD drives per X-Brick with redundant dual processor controllers

- Provides server-side iSCSI and Fibre Channel block attachment

- Integrated data footprint reduction (DFR) including global dedupe and thin provisioning

- Designed for extending duty cycle, minimizing wear of SSD

- Removes need for dedicated hot spare drives

- Capable of sustained performance and availability with multiple drive failure

- Only unique data blocks are saved, others tracked via in-memory meta data pointers

- Reduces overhead of data protection vs. traditional small RAID 5 or RAID 6 configurations

- Eliminates overhead of back-end functions performance impact on applications

- Deterministic storage I/O performance (IOPs, latency, bandwidth) over life of system

When would you use XtremIO vs. another storage system?

If you need all enterprise like data services including thin provisioning, dedupe, resiliency with deterministic performance on an all-flash system with raw capacity from 10-40TB (today) then XtremIO could be a good fit. On the other hand, if you need a mix of SSD based storage I/O performance (IOPS, latency or bandwidth) along with some HDD based space capacity, then a hybrid or traditional storage system could be the solution. Then there are hybrid scenarios where a hybrid storage system, array or appliance (mix of SSD and HDD) are used for most of the applications and data, with an XtremIO handling more tasks that are demanding.

How does XtremIO compare to others?

EMC with XtremIO is taking a different approach than some of their competitors whose model is to compare their faster flash-based solutions vs. traditional mid-market and enterprise arrays, appliances or storage systems on a storage I/O IOP performance basis. With XtremIO there is improved performance measured in IOPs or database transactions among other metrics that matter. However there is also an emphasis on consistent, predictable, quality of service (QoS) or what is known as deterministic storage I/O performance basis. This means both higher IOPs with lower latency while doing normal workload along with background data services (snapshots, data footprint reduction, etc).

Some of the competitors focus on how many IOPs or work they can do, however without context or showing impact to applications when back-ground tasks or other data services are in use. Other differences include how cluster nodes are interconnected (for scale out solutions) such as use of Ethernet and IP-based networks vs dedicated InfiniBand or PCIe fabrics. Host server attachment will also differ as some are only iSCSI or Fibre Channel block, or NAS file, or give a mix of different protocols and interfaces.

An industry trend however is to expand beyond the flash SSD need for speed focus by adding context along with QoS, deterministic behavior and addition of data services including snapshots, local and remote replication, multi-tenancy, metering and metrics, security among other items.

Who or what are XtremIO competition?

To some degree vendors who only have PCIe flash SSD cards might place themselves as the alternative to all SSD or hybrid mixed SSD and HDD based solutions. FusionIO used to take that approach until they acquired NexGen (a storage system) and now have taken a broader more solution balanced approach of use the applicable tool for the task or application at hand.

Other competitors include the all SSD based storage arrays, systems or appliance vendors which includes legacy existing as well as startups vendors that include among others IBM who bought TMS (flashsystems), NetApp (EF540), Solidfire, Pure, Violin (who did a recent IPO) and Whiptail (bought by Cisco). Then there are the hybrid which is a long list including Cloudbyte (software), Dell, EMCs other products, HDS, HP, IBM, NetApp, Nexenta (Software), Nimble, Nutanix, Oracle, Simplivity and Tintri among others.

What’s new with this XtremIO announcement

10TB X-Bricks enable 10 to 40TB (physical space capacity) per cluster (available on 11/19/13). 20TB X-Bricks (larger capacity drives) will double the space capacity in January 2014. If you are doing the math, that means either a single brick (dual controller) system, or up to four bricks (nodes, each with dual controllers) configurations. Common across all system configurations are data features such as thin provisioning, inline data footprint reduction (e.g. dedupe) and XtremIO Data Protection (XDP).

What does XtremIO look like?

XtremIO consists of up to four nodes (today) based on what EMC calls X-Bricks.

25 SSD drive X-Brick

Each 4U X-Brick has 25 eMLC SSD drives in a standard EMC 2U DAE (disk enclosure) like those used with the VNX and VMAX for SSD and Hard Disk Drives (HDD). In addition to the 2U drive shelve, there are a pair of 1U storage processors (e.g. controllers) that give redundancy and shared access to the storage shelve.

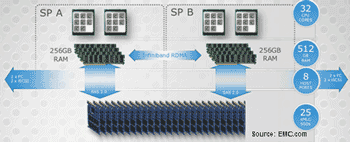

XtremIO X-Brick block diagram

XtremIO storage processors (controllers) and drive shelve block diagram. Each X-Brick and their storage processors or controllers communicate with each other and other X-Bricks via a dedicated InfiniBand using Remote Direct Memory Access (RDMA) fabric for memory to memory data transfers. The controllers or storage processors (two per X-Brick) each have dual processors with eight cores for compute, along with 256GB of DRAM memory. Part of each controllers DRAM memory is set aside as a mirror its partner or peer and vise versa with access being over the InfiniBand fabric.

XtremIO X-Brick four node fabric cluster or instance

How XtremIO works

Servers access XtremIO X-Bricks using iSCSI and Fibre Channel for block access. A responding X-Brick node handles the storage I/O request and in the case of a write updates other nodes. In the case of a write, the handling node or controller (aka storage processor) checks its meta data map in memory to see if the data is new and unique. If so, the data gets saved to SSD along with meta data information updated across all nodes. Note that data gets ingested and chunked or sharded into 4KB blocks. So for example if a 32KB storage I/O request from the server arrives, that is broken (e.g. chunk or shard) into 8 4KB pieces each with a mathematical unique fingerprint created. This fingerprint is compared to what is known in the in memory meta data tables (this is a hexadecimal number compare so a quick operation). Based on the comparisons if unique the data is saved and pointers created, if already exists, then pointers are updated.

In addition to determining if unique data, the fingerprint is also used for generate a balanced data dispersal plan across the nodes and SSD devices. Thus there is the benefit of reducing duplicate data during ingestion, while also reducing back-end IOs within the XtremIO storage system. Another byproduct is the reduction in time spent on garbage collection or other background tasks commonly associated with SSD and other storage systems.

Meta data is kept in memory with a persistent copied written to reserved area on the flash SSD drives (think of as a vault area) to support and keep system state and consistency. In between data consistency points the meta data is kept in a log journal like how a database handles log writes. What’s different from a typical database is that XtremIO XIOS platform software does these consistency point writes for persistence on a granularity of seconds vs. hours or minutes.

What about rumor that XtremIO can only do 4KB IOPs?

Does this mean that the smallest storage I/O or IOP that XtremIO can do is 4GB?

That is a rumor or some fud I have heard floated by a competitor (or two or three) that assumes if only 4KB internal chunk or shard being used for processing, that must mean no IOPs smaller than 4KB from a server.

XtremIO can do storage I/O IOP sizes of 512 bytes (e.g. the standard block size) as do other systems. Note that the standard server storage I/O block or IO size is 512 bytes or multiples of that unless the new 4KB advanced format (AF) block size being used which based on my conversations with EMC, AF is not supported, yet. (Updated 11/15/13 EMC has indicated that host (front-end) 4K AF support, along with 512 byte emulation modes are available now with XIOS). Also keep in mind that since XtremIO XIOS internally is working with 4KB chunks or shards, that is a stepping stone for being able to eventually leverage back-end AF drive support in the future should EMC decide to do so (Updated 11/15/13 Waiting for confirmation from EMC about if back-end AF support is now enabled or not, will give more clarity as it is recieved).

What else is EMC doing with XtremIO?

- VCE Vblock XtremIO systems for SAP HANA (and other databases) in memory databases along with VDI optimized solutions.

- VPLEX and XtremIO for extended distance local, metro and wide area HA, BC and DR.

- EMC PowerPath XtremIO storage I/O path optimization and resiliency.

- Secure Remote Support (aka phone home) and auto support integration.

Boosting your available software license minutes (ASLM) with SSD

Another use of SSD has been in the past the opportunity to make better use of servers stretching their usefulness or delaying purchase of new ones by improving their effective use to do more work. In the past this technique of using SSDs to delay a server or CPU upgrade was used when systems when hardware was more expensive, or during the dot com bubble to fill surge demand gaps. This has the added benefit of stretching database and other expensive software licenses to go further or do more work. The less time servers spend waiting for IOP’s means more time for doing useful work and bringing value of the software license. Otoh, the more time spent waiting is lot available software minutes which is cost overhead.

Think of available software licence minutes (ASLM) in terms of available software license minutes where if doing useful work your software is providing value. On the other hand if those minutes are not used for useful work (e.g. spent waiting or lost due to CPU or server or IO wait, then they are lost). This is like airlines and available seat miles (ASM) metric where if left empty it’s a lost opportunity, however if used, then value, not to mention if yield management applied to price that seat differently. To make up for that loss many organizations have to add extra servers and thus more software licensing costs.

Can we get a side of context with them metrics?

EMC along with some other vendors are starting to give more context with their storage I/O performance metrics that matter than simple IOP’s or Hero Marketing Metrics. However context extends beyond performance to also availability and space capacity which means data protection overhead. As an example, EMC claims 25% for RAID 5 and 20% for RAID 6 or 30% for RAID 5/RAID 6 combo where a 25 drive (SSD) XDP has a 8% overhead. However this assumes a 4+1 (5 drive) RAID , not apples to apples comparison on a space overhead basis. For example a 25 drive RAID 5 (24+1) would have around an 4% parity protection space overhead or a RAID 6 (23+2) about 8%.

Granted while the space protection overhead might be more apples to apples with the earlier examples to XDP, there are other differences. For example solutions such as XDP can be more tolerant to multiple drive failures with faster rebuilds than some of the standard or basic RAID implementations. Thus more context and clarity would be helpful.

StorageIO would like see vendors including EMC along with startups who give data protection space overhead comparisons without context to do so (and applaud those who provide context). This means providing the context for data protection space overhead comparisons similar to performance metrics that matter. For example simply state with an asterisk or footnote comparing a 4+1 RAID 5 vs. a 25 drive erasure or forward error correction or dispersal or XDP or wide stripe RAID for that matter (e.g. can we get a side of context). Note this is in no way unique to EMC and in fact quite common with many of the smaller startups as well as established vendors.

General comments

My laundry list of items which for now would be nice to have’s, however for you might be need to have would include native replication (today leverages Recover Point), Advanced Format (4KB) support for servers (Updated 11/15/13 Per above, EMC has confirmed that host/server-side (front-end) AF along with 512 byte emulation modes exist today), as well as SSD based drives, DIF (Data Integrity Feature), and Microsoft ODX among others. While 12Gb SAS server to X-Brick attachment for small in the cabinet connectivity might be nice for some, more practical on a go forward basis would be 40GbE support.

Now let us see what EMC does with XtremIO and how it competes in the market. One indicator to watch in the industry and market of the impact or presence of EMC XtremIO is the amount of fud and mud that will be tossed around. Perhaps time to make a big bowl of popcorn, sit back and enjoy the show…

Ok, nuff said (for now).

Cheers

Gs

Greg Schulz – Author Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press) and Resilient Storage Networks (Elsevier)

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO LLC All Rights Reserved