
Can RAID extend nand flash SSD life?

Imho, the short answer is YES, under some circumstances.

There is a myth, and some FUD, that RAID (Redundant Array of Independent Disks) shortens the life of nand flash SSD (Solid State Devices) vs. HDD (Hard Disk Drives) due to extra IOPs. The reality is that depending on the RAID level, how it is configured and implemented, and other factors, nand flash SSD life can be extended, as I discuss in this video.

Nand flash SSD cells and wear

First, there is a myth that because nand flash SSDs do not have moving parts like hard disk drives (HDDs), they do not wear out or break. That is just a myth: nand flash by its nature wears out with write usage. This is due to how data is stored in cells that have a rated number of program/erase (P/E) cycles, which varies by type of medium. For example, Single Level Cell (SLC) has a longer P/E life vs. Multi-Level Cell (MLC) and eMLC, which store multiple bits per cell.

There are a number of factors that contribute to nand flash wear, also known as duty cycle or durability, tied to P/E cycles. For example, some storage systems or controllers do a better job than others, both at the lower level flash translation layer (FTL) and in firmware, caching using DRAM and IO optimization such as write ordering or grouping.

Now what about this RAID and SSD thing?

Ok, first as a recap, keep in mind that there are many RAID levels along with variations and enhancements, as well as where and how they are implemented, ranging from software to hardware, adapters to controllers to storage systems.


In the case of RAID 1 or mirroring, just like replication or any other one-to-one or one-to-many copy operation, a write to one device is echoed to another. In the case of RAID 5, data and parity are spread across drives, with the parity rotated across all drives in an equal manner.
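To make the rotating parity idea concrete, here is a minimal sketch. The function names and the particular rotation formula are assumptions for illustration only; real RAID 5 implementations use various layouts. It simply counts how many parity chunks land on each drive over many stripes.

```python
def parity_drive(stripe_index: int, num_drives: int) -> int:
    """Drive that holds parity for a given stripe.

    Assumed layout: parity starts on the last drive and moves one
    drive to the left with each successive stripe, wrapping around.
    """
    return (num_drives - 1 - stripe_index) % num_drives


def parity_write_counts(num_stripes: int, num_drives: int) -> list[int]:
    """Count how many stripes place their parity chunk on each drive."""
    counts = [0] * num_drives
    for stripe in range(num_stripes):
        counts[parity_drive(stripe, num_drives)] += 1
    return counts


# A 4+1 group (5 drives) over 1000 stripes.
counts = parity_write_counts(num_stripes=1000, num_drives=5)
print(counts)  # [200, 200, 200, 200, 200]
```

In this simplified model no single drive becomes a dedicated parity drive absorbing every parity update, which is the point of rotating the parity.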

Some of the FUD, myths or misunderstandings come into play because not all RAID 5 implementations, as an example, are the same. Some do a better job of buffering or caching data in battery-protected mirrored DRAM until a full stripe write can occur, falling back to a partial write only if needed.
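Why write gathering matters can be shown with some rough counting. The sketch below is a simplified model, not any vendor's implementation: it assumes a single-parity group, that an ungathered small write is handled as a classic read-modify-write (write new data, write new parity), and it ignores the SSD's own internal write amplification. The function names are made up for illustration.

```python
def full_stripe_device_writes(data_drives: int) -> int:
    """Chunk writes when a whole stripe is gathered and written at once:
    one write per data chunk plus a single parity write."""
    return data_drives + 1


def ungathered_device_writes(data_drives: int) -> int:
    """Chunk writes when the same data chunks are written one at a time:
    each small write updates its data chunk and the parity chunk again."""
    return 2 * data_drives


for data_drives in (3, 4, 14):
    gathered = full_stripe_device_writes(data_drives)
    ungathered = ungathered_device_writes(data_drives)
    print(f"{data_drives}+1: gathered={gathered} ungathered={ungathered}")
```

For a 4+1 group this model gives 5 chunk writes when the stripe is gathered vs. 8 when it is not, and in the ungathered case the parity chunk alone is rewritten four times. That is the kind of extra P/E consumption good caching avoids.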

Another attribute is the chunk or shard size (how much data is sent to each drive member) along with the stripe width (how many drives). Some systems have narrow stripes of say 3+1 or 4+1 or 5+1 while others can be 14+1 or 15+1 or wider. Thus, data can be written across a wider number of drives reducing the P/E consumption or use of a single drive depending on implementation.

How about RAID 6 (dual parity)?

Same thing: it is a matter of how good the implementation is, how the write gathering is done and so forth.

What about RAID wearing out nand flash SSD?

While it is possible that it has occurred or can occur depending on the type of RAID implementation, lack of caching or optimization, configuration, type of SSD, RAID level and other things, in general I will say myth busted.

Want some proof?

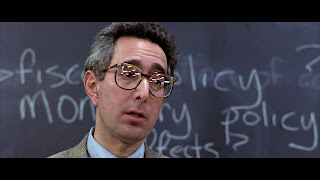

I could go through a long technical proof point citing lots of facts, figures, experts and so forth, leaving you all silenced and dazed similar to the students listening to Ben Stein in Ferris Bueller's Day Off (Click here to see what I mean) asking “Anybody, anybody? Bueller?”

Image via nostagjicmoviesandthings.blogspot.com

How about some simple SSD and storage math?

On a very conservative basis, my estimate is that around 250PB of nand flash SSD drives are shipped and installed on a revenue basis attached to or in storage systems and appliances. That combines what Dell + DotHill + EMC + Fujitsu + HDS + HP + IBM (including TMS) + NEC + NetApp + Oracle ship, among other legacy vendors, along with new all flash as well as hybrid vendors (e.g. Cloudbyte, FusionIO (via their Nexgen acquisition), Kaminario, Greenbytes, Nutanix or Nimble, Purestorage, Starboard or Solidfire, Tegile or Tintri, Violin or Whiptail among others).

It is also a safe assumption, based on how customers configure and use those and other storage systems, that most of them are deployed with some form of RAID. Thus if things were as bad as some researchers, vendors and their pundits have made them out to be, wouldn’t we be hearing of those issues?

Is it just a RAID 5 problem and that RAID 6 magically corrects the problem?

Well, that depends on apples to apples vs. apples to oranges comparisons.

For example, if you are using a 14+2 (16 drive) RAID 6 to compare to, say, a 3+1 (4 drive) RAID 5, that is not a fair comparison. Granted, it is a handy one if you are a vendor that supports wider RAID groups, stripes and ranks vs. those who do not. However, also keep in mind that some legacy vendors actually also support wide stripes and RAID groups.

So in some cases the magic is not in the RAID level, but rather in the implementation and how it is configured (or not).
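Here is a back-of-the-envelope sketch of that apples to oranges comparison. It assumes ideal full-stripe writes with parity rotated evenly across the group and ignores caching and the SSD's internal behavior. The function name and the 42,000 chunk workload are made up for illustration.

```python
from fractions import Fraction


def per_drive_chunk_writes(user_chunks: int, data_drives: int,
                           parity_drives: int) -> Fraction:
    """Average chunks written per drive for full-stripe writes,
    assuming parity is rotated evenly across all drives in the group."""
    total_drives = data_drives + parity_drives
    # Every data_drives chunks of user data cost one full stripe,
    # i.e. total_drives chunk writes across the group.
    total_device_chunks = Fraction(user_chunks * total_drives, data_drives)
    return total_device_chunks / total_drives


workload = 42_000  # chunks of user data written (hypothetical)
narrow_raid5 = per_drive_chunk_writes(workload, data_drives=3, parity_drives=1)
wide_raid6 = per_drive_chunk_writes(workload, data_drives=14, parity_drives=2)
wide_raid5 = per_drive_chunk_writes(workload, data_drives=14, parity_drives=1)
print(narrow_raid5, wide_raid6, wide_raid5)  # 14000 3000 3000
```

Under these assumptions a 14+2 RAID 6 and a 14+1 RAID 5 put the same write load on each drive, while the 3+1 RAID 5 puts several times more on each drive. In this model the difference comes from the stripe width, not the RAID level.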

Watch this TechTarget produced video recorded live while I was at EMCworld 2013 to learn more.

Otherwise, ok, nuff said (for now).

Cheers

Gs

Greg Schulz – Author Cloud and Virtual Data Storage Networking (CRC Press), The Green and Virtual Data Center (CRC Press) and Resilient Storage Networks (Elsevier)

twitter @storageio

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2026 Server StorageIO and UnlimitedIO LLC All Rights Reserved