Marketers, particularly those involved with anything resembling Solid State Devices (SSD), will tell you SSD is the future, as will some researchers along with their fans and pundits. Some will tell you that the future only has room for SSD, with the current flavor du jour being nand flash (both Single Level Cell aka SLC and Multi Level Cell aka MLC), and that any other form of storage medium (e.g. Hard Disk Drives or HDD, and tape) is dead, so you should avoid wasting your money on it.

Of course others, along with their fans or supporters, who do not have an SSD play or product will tell you to forget about SSDs because they are not ready yet.

Then there are those who take no sides per se, simply providing comments and perspectives along with things to be considered, which others then use to spin stories for or against.

For the record, I have been a fan and user of various forms of SSD, along with other variations of tiered storage mediums, using them where they fit best for several decades as a customer in IT, as a vendor, and as an analyst and advisory consultant. Thus my perspective and opinion is that SSDs do in fact have a very bright future. However, I also believe that other storage mediums are not dead yet, although their roles are evolving while their technologies continue to be developed. In other words, use the right technology and tool, packaged and deployed in the most effective way for the task at hand.

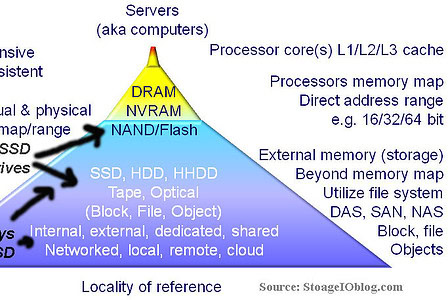

Memory and tiered storage hierarchy

Consequently, while some SSD vendors, their fans, supporters, pundits and others might be put off by some recent UCSD research that does not paint SSD, and in particular nand flash, in the best long-term light, it caught my attention, and here is why. First, I have already seen in different venues where some are using the research as a tool, club or weapon against SSD and in particular nand flash, which should be no surprise. Second, I have also seen those who do not agree with the research at best dismiss the findings. Others are using it as a conversation or topic piece for their columns or other venues such as here.

The reason the UCSD research caught my eye was that it appeared to be looking at how nand SSD technology will evolve from where it is today to where it will be in ten years or so.

While ten years may seem like a long time, just look back at how fast things evolved over the past decade. Granted, the UCSD research is open to discussion, debate and dismissal, as is clear in the comments of this article here. However, the research does give a counterpoint or perspective to some of the hype, which can mean that reality, along with where things are headed or need to be discussed, exists somewhere between the two extremes. While I do not agree with all the observations or opinions of the research, it does give stimulus for discussing things including best practices around deployment vs. simply talking about adoption.

It has taken many decades for people to become comfortable or familiar with the pros and cons of HDD or tape for that matter.

Likewise, some are familiar (for good or bad) with DRAM-based SSD of earlier generations. On the other hand, while many people use various forms of nand flash SSD, ranging from what is inside their cell phone or SD cards for cameras to USB thumb drives to SSD in drive form factors, on PCIe cards or in storage systems and appliances, there is still an evolving comfort and confidence level for business and enterprise storage use. Some have embraced it, some have dismissed it, and many if not most are intrigued and want to know more, using nand flash SSD in some shape or form while gaining confidence.

Part of gaining confidence is moving beyond the industry hype to look at and understand the pros and cons, and how to leverage or work around the constraints. A long time ago a wise person told me that it is better to know the good, bad and ugly about a product, service or technology so that you can leverage the best, and configure, plan and manage around the bad to avoid or minimize the ugly. Based on that philosophy, I find many IT customers and even some VARs and vendors wanting to know the good, the bad and the ugly, not to hang a vendor or their technology and products out to dry, but rather so that they can be comfortable in knowing when, where, why and how to use them to be most effective.

Granted, getting some of the not so good information may require an NDA (Non Disclosure Agreement) or other confidential discussions. After all, what vendor or solution provider wants to let anything less than favorable out into the blogosphere, twittersphere, googleplus, tabloids, news sphere or other competitive venues?

Ok, let's bring this back to the UCSD research report titled "The Bleak Future of NAND Flash Memory".

Click here or on the above image to read the UCSD research report

I'm not concerned that the UCSD research was less than favorable, as some others might be. After all, it is looking out into the future, and if there is a concern, it provides a glimpse of what to keep an eye on.

Likewise, the research report could be taken as simply a barometer of what could happen if no improvements or new technologies evolve. Looking back, for example, the HDD would have hit the proverbial brick wall, also known as the superparamagnetic barrier, many years ago if new recording methods and materials had not been deployed, including a shift to perpendicular recording, something that was recently added to tape.

Tomorrow's SSDs and storage mediums will still be based on nand flash including SLC, MLC and eMLC along with other variants, not to mention phase change memory (PCM) and other possible contenders.

Today's SSDs have shifted from being DRAM-based with HDD or even flash-based persistent backing storage to being nand flash-based, both SLC and MLC, with enhanced or enterprise MLC (eMLC) appearing. Likewise, the density of SSDs continues to increase, meaning more data packed into the same die or footprint and more dies stacked in a chip package to boost capacity while decreasing cost. However, what is happening behind the scenes is also a big differentiator among SSDs, namely the quality of the firmware and low-level page management at the flash translation layer (FTL). Hence the saying that anybody with a soldering iron and the ability to pull together off-the-shelf FTLs and packaging can create some form of an SSD. How effective a product will be depends on the intelligence and robustness of the combination of the dies, FTL, controller and associated firmware and device drivers, along with other packaging options plus the testing, validation and verification they undergo.

Various SSD locations, types, packaging and usage scenario options

Good SSD vendors and solution providers, I believe, will be able to discuss your concerns around endurance, duty cycles, data integrity and other related topics to establish confidence around current and future issues, granted you may have to go under NDA to gain that insight. On the other hand, those who feel threatened, or who are not able or interested in addressing or demonstrating confidence for the long haul, will be more likely to dismiss studies, research, reports, opinions or discussions that dig deeper into creating confidence via an understanding of how things work so that customers can more fully leverage those technologies.
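
As a conversation starter for those endurance and duty cycle discussions, here is a minimal back-of-the-envelope sketch in Python. It is only a rough rule of thumb, not how any particular vendor rates its devices: it assumes perfect wear leveling and a constant workload, and the function name, parameters and sample figures are all hypothetical and chosen purely for illustration (they are not from the UCSD report).

```python
def estimated_lifetime_years(capacity_gb, pe_cycles, daily_host_writes_gb,
                             write_amplification=1.0):
    """Rough years until the rated program/erase (P/E) cycles are used up.

    Assumes perfect wear leveling and a constant workload; real devices
    also depend on over-provisioning, firmware and error correction.
    """
    if capacity_gb <= 0 or pe_cycles <= 0:
        raise ValueError("capacity and P/E cycles must be positive")
    if daily_host_writes_gb <= 0 or write_amplification <= 0:
        raise ValueError("daily writes and write amplification must be positive")
    # Total host data that can be written before cells reach their rated cycles.
    total_host_writes_gb = capacity_gb * pe_cycles / write_amplification
    return total_host_writes_gb / (daily_host_writes_gb * 365)


# Hypothetical figures for illustration only, not vendor or research data.
if __name__ == "__main__":
    for label, cycles in (("higher-endurance part", 100_000),
                          ("lower-endurance part", 3_000)):
        years = estimated_lifetime_years(200, cycles, 500, write_amplification=2.0)
        print(f"{label}: about {years:.1f} years")
```

The point is not the specific numbers. It is that rated cycles, capacity, write amplification and your actual daily write volume all interact, which is exactly the kind of detail worth asking a vendor about.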

Some will view and use reports such as the one from UCSD as a club or weapon against SSD, and in particular against nand flash, to help their cause or campaign, while others will use it to stimulate controversy and page views. My reason for bringing up the topic and discussion is to stimulate thinking and help increase awareness of and confidence in technologies such as SSD, near and long-term. Regardless of whether your view is that SSD will replace HDD or that they will continue to coexist as tiered storage mediums into the future, gaining confidence in the technologies, along with knowing when, where and how to use them, is an important step in shifting from industry adoption to customer deployment.

What say you?

Is SSD the best thing ever, and are you dumb or foolish if you do not embrace it totally (the fan, pundit or cheerleader view)?

Or is SSD great when and where used in the right place so embrace it?

How will SSD continue to evolve including nand and other types of memories?

Are you comfortable with SSD as a long-term data storage medium, or for today, is it simply a good way to address performance bottlenecks?

On the other hand, is SSD interesting, but you are not yet comfortable with or confident in the technology and want to learn more (in other words, a skeptic's view)?

Or perhaps the true cynic's view, which is that SSDs are nothing but the latest buzzword bandwagon fad technology?

Ok, nuff said for now, other than here is some extra related SSD material:

SSD options for Virtual (and Physical) Environments: Part I Spinning up to speed on SSD

SSD options for Virtual (and Physical) Environments, Part II: The call to duty, SSD endurance

Part I: EMC VFCache respinning SSD and intelligent caching

Part II: EMC VFCache respinning SSD and intelligent caching

IT and storage economics 101, supply and demand

2012 industry trends perspectives and commentary (predictions)

Speaking of speeding up business with SSD storage

New Seagate Momentus XT Hybrid drive (SSD and HDD)

Are Hard Disk Drives (HDDs) getting too big?

Industry adoption vs. industry deployment, is there a difference?

Data Center I/O Bottlenecks Performance Issues and Impacts

EMC VPLEX: Virtual Storage Redefined or Respun?

EMC interoperability support matrix

Cheers

gs

Greg Schulz – Author Cloud and Virtual Data Storage Networking (CRC Press, 2011), The Green and Virtual Data Center (CRC Press, 2009), and Resilient Storage Networks (Elsevier, 2004)

twitter @storageio

All Comments, (C) and (TM) belong to their owners/posters, Other content (C) Copyright 2006-2012 StorageIO and UnlimitedIO All Rights Reserved
